Introduction: The unavoidable intersection of AI, talent, and ethics

Artificial intelligence (AI) is fundamentally reshaping the landscape of talent acquisition, offering immense opportunities to streamline operations, enhance efficiency, and manage applications at scale. Modern AI tools are now used across the recruitment lifecycle, from targeted advertising and competency assessment to resume screening and background checks. This transformation has long been driven by the promise of objectivity—removing human fatigue and unconscious prejudice from the hiring process.

However, the rapid adoption of automated systems has introduced a critical paradox: the very technology designed to eliminate human prejudice often reproduces, and sometimes amplifies, the historical biases embedded within organizations and society. For organizations committed to diversity, equity, and inclusion (DEI), navigating AI bias is not merely a technical challenge but an essential prerequisite for ethical governance and legal compliance. Successfully leveraging AI requires establishing robust oversight structures that ensure technology serves, rather than subverts, core human values.

Understanding AI bias in recruitment: The origins of systemic discrimination

What is AI bias in recruitment?

AI bias refers to systematic discrimination embedded within machine learning systems that reinforces existing prejudice, stereotyping, and societal discrimination. These AI models operate by identifying patterns and correlations within vast datasets to inform predictions and decisions.

The scale at which this issue manifests is significant. When AI algorithms detect historical patterns of systemic disparities in the training data, their conclusions tend to reflect those disparities. Because machine learning tools process data at scale (nearly all Fortune 500 companies reportedly use AI screeners), even minute biases in the initial data can lead to widespread, compounding discriminatory outcomes.

The paramount legal concern in this domain is typically not intentional discrimination but disparate impact. Disparate impact occurs when an outwardly neutral policy or selection tool, such as an AI algorithm, unintentionally produces a selection rate for a protected group that is substantially lower than the rate for the most-selected group. This systemic risk requires organizations to adopt proactive monitoring and mitigation strategies.
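As a rough illustration of how disparate impact is commonly screened for, the sketch below computes each group's selection rate and divides it by the rate of the most-selected group. The 0.8 threshold is the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures; the group labels, counts, and function names are hypothetical, and a ratio below the threshold is a prompt for closer statistical review rather than a legal finding.

```python
from typing import Dict, List, Tuple


def impact_ratios(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate.

    counts maps a group label to (number selected, number of applicants).
    Groups with zero applicants are skipped.
    """
    rates = {g: sel / total for g, (sel, total) in counts.items() if total > 0}
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any selections")
    return {g: r / top for g, r in rates.items()}


def flag_adverse_impact(counts: Dict[str, Tuple[int, int]],
                        threshold: float = 0.8) -> List[str]:
    """List groups whose impact ratio falls below the threshold."""
    return sorted(g for g, ratio in impact_ratios(counts).items()
                  if ratio < threshold)


# Hypothetical screening outcomes: (selected, applicants) per group.
example = {"group_a": (48, 100), "group_b": (30, 100)}
ratios = impact_ratios(example)
flagged = flag_adverse_impact(example)
print(ratios, flagged)
```

With these made-up numbers, group_b is selected at 30% against 48% for group_a, an impact ratio of 0.625, so it is flagged for review.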

Key factors contributing to AI bias

AI bias is complex, arising from multiple failure points across the system’s lifecycle.

Biased training data

The most common source of AI bias is the training data used to build the models. Data bias refers specifically to the skewed or unrepresentative nature of the information used to train the AI model. AI models learn by observing patterns in large data sets. If a company trains on ten years of historical hiring data from a workforce that was predominantly homogeneous or male, the algorithm can learn to treat attributes associated with male candidates as predictors of success. Trained on past discrimination, the AI then perpetuates gender or racial inequality when making forward-looking recommendations.

Algorithmic design choices

While data provides the fuel, algorithmic bias defines how the engine runs. Algorithmic bias is a subset of AI bias that occurs when systematic errors or design choices inadvertently introduce or amplify existing biases. Developers may unintentionally introduce bias through the selection of features or parameters used in the model. For example, if an algorithm is instructed to prioritize applicants from prestigious universities, and those institutions historically have non-representative demographics, the algorithm may produce discriminatory outcomes without explicitly using protected characteristics like race or gender. Such features act as proxy variables: they are often tightly correlated with protected characteristics and so lead to the same negative result.
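One simple way to look for proxy variables is to check how unevenly a candidate feature is distributed across demographic groups. The sketch below is a minimal illustration under stated assumptions: the records, the `elite_university` flag, and the function names are all hypothetical, and a large gap only signals that a feature deserves scrutiny, not that it is discriminatory.

```python
from collections import defaultdict


def feature_rate_by_group(records, feature, group_key):
    """Share of records in each group for which the binary feature is true."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for rec in records:
        totals[rec[group_key]] += 1
        if rec[feature]:
            hits[rec[group_key]] += 1
    return {g: hits[g] / totals[g] for g in totals}


def proxy_gap(records, feature, group_key):
    """Largest difference in feature prevalence between any two groups."""
    rates = feature_rate_by_group(records, feature, group_key)
    return max(rates.values()) - min(rates.values())


# Hypothetical applicant records with a self-reported group label.
applicants = [
    {"group": "a", "elite_university": True},
    {"group": "a", "elite_university": True},
    {"group": "a", "elite_university": True},
    {"group": "a", "elite_university": False},
    {"group": "b", "elite_university": True},
    {"group": "b", "elite_university": False},
    {"group": "b", "elite_university": False},
    {"group": "b", "elite_university": False},
]
gap = proxy_gap(applicants, "elite_university", "group")
print(gap)
```

Here the feature is true for 75% of group a and 25% of group b, so a model that rewards it would favor group a even though group membership is never an input.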

Lack of transparency in AI models

The complexity of modern machine learning, particularly deep learning models, often results in a "black box" where the input data and output decision are clear, but the underlying logic remains opaque. This lack of transparency poses a critical barrier to effective governance and compliance. If HR and compliance teams cannot understand the rationale behind a candidate scoring or rejection, they cannot trace errors, diagnose embedded biases, or demonstrate that the AI tool adheres to legal fairness standards. Opacity transforms bias from a fixable error into an unmanageable systemic risk.

Human error and programming bias

Human bias, or cognitive bias, can subtly infiltrate AI systems at multiple stages. This is often manifested through subjective decisions made by developers during model conceptualization, selection of training data, or through the process of data labeling. Even when the intention is to create an objective system, the unconscious preferences of the team building the technology can be transferred to the model.

The risk inherent in AI adoption is the rapid, wide-scale automation of inequality. Historical hiring data contains bias, which the AI treats as the blueprint for successful prediction. Because AI systems can process millions of applications, this initial bias is multiplied across every decision. Furthermore, if the system is designed to continuously improve itself using its own biased predictions, it can become locked into a self-perpetuating cycle of discrimination, a phenomenon demonstrated in early high-profile failures. This multiplication effect elevates individual prejudiced decisions into an organizational liability that invites legal scrutiny under disparate impact analysis.

Real-world implications of AI bias in recruitment

The impact of algorithmic bias extends beyond theoretical risk, presenting tangible consequences for individuals, organizational diversity goals, legal standing, and public image.

Case studies and examples of AI bias

One of the most widely cited instances involves Amazon’s gender-biased recruiting tool. Amazon developed an AI system to automate application screening by analyzing CVs submitted over a ten-year period. Since the data was dominated by male applicants, the algorithm learned to systematically downgrade or penalize resumes that included female-associated language or referenced all-women's colleges. Although Amazon’s technical teams attempted to engineer a fix, they ultimately could not make the algorithm gender-neutral and were forced to scrap the tool. This case highlights that complex societal biases cannot be solved merely through quick technological adjustments.

Furthermore, research confirms severe bias in resume screening tools. Studies have shown that AI screeners consistently prefer White-associated names in over 85% of comparisons. The system might downgrade a qualified applicant based on a proxy variable, such as attending a historically Black college, if the training data reflected a historical lack of success for graduates of those institutions within the organization. This practice results in qualified candidates being unfairly rejected based on non-job-related attributes inferred by the algorithm.

Mitigating AI bias in recruitment: A strategic, multi-layered approach

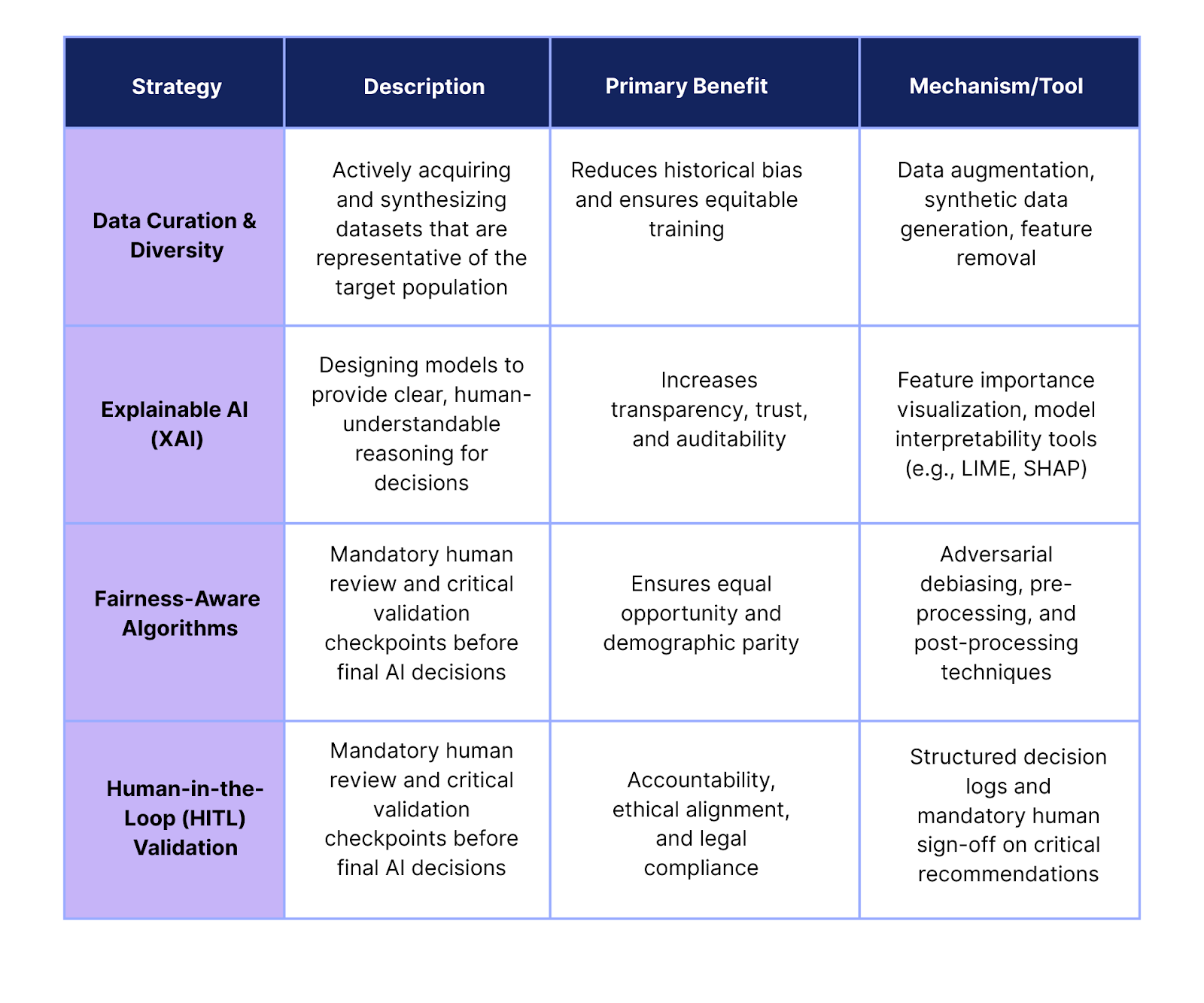

Effective mitigation of AI bias requires a comprehensive strategy encompassing technical debiasing, structural governance, and human process augmentation.

Best practices for identifying and mitigating bias

Regular audits and bias testing

Systematic testing and measurement are non-negotiable components of responsible AI use. Organizations must implement continuous monitoring and regular, independent audits of their AI tools to identify and quantify bias. These audits should evaluate outcomes based on formal fairness metrics, such as demographic parity (equal selection rates across groups) and equal opportunity (equal true positive rates for qualified candidates). Regulations such as NYC Local Law 144 now explicitly mandate annual independent bias audits for automated employment decision tools (AEDTs).
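The two metrics named above can be computed directly from audit data. The following is a minimal sketch, assuming each audited record carries a group label, a 0/1 "qualified" flag, and a 0/1 "selected" flag; the data and function names are hypothetical, and in practice the "qualified" label itself needs scrutiny because it may encode past bias.

```python
from collections import defaultdict


def rate(flags):
    """Fraction of 1s in a non-empty list of 0/1 flags."""
    return sum(flags) / len(flags)


def fairness_gaps(rows):
    """Compute demographic parity and equal opportunity gaps.

    rows is a list of (group, qualified, selected) tuples with 0/1 flags.
    Groups with no qualified candidates are left out of the true positive
    rate comparison.
    """
    selected_by_group = defaultdict(list)
    selected_if_qualified = defaultdict(list)
    for group, qualified, selected in rows:
        selected_by_group[group].append(selected)
        if qualified:
            selected_if_qualified[group].append(selected)
    selection_rates = {g: rate(v) for g, v in selected_by_group.items()}
    tprs = {g: rate(v) for g, v in selected_if_qualified.items()}
    return {
        "selection_rates": selection_rates,
        "true_positive_rates": tprs,
        "demographic_parity_gap":
            max(selection_rates.values()) - min(selection_rates.values()),
        "equal_opportunity_gap": max(tprs.values()) - min(tprs.values()),
    }


# Hypothetical audit sample: (group, qualified, selected).
audit_rows = [
    ("a", 1, 1), ("a", 1, 1), ("a", 0, 1), ("a", 0, 0),
    ("b", 1, 1), ("b", 1, 0), ("b", 0, 0), ("b", 0, 0),
]
report = fairness_gaps(audit_rows)
print(report)
```

A gap of zero on either metric means the groups are treated identically by that measure; which metric to prioritize, and what gap is tolerable, is a policy decision rather than something the code can settle.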

Diversifying training data

Because the root of many AI bias problems lies in unrepresentative historical data, mitigation must begin with data curation. Organizations must move beyond passively accepting existing data and proactively curate training datasets to be diverse and inclusive, reflecting a broad candidate pool. Technical debiasing techniques can be applied, such as removing or transforming input features that correlate strongly with bias and rebuilding the model (pre-processing debiasing). Data augmentation and synthetic data generation can also be employed to ensure comprehensive coverage across demographic groups.
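As one concrete example of pre-processing debiasing, the sketch below implements reweighing, a technique that assigns each training example a weight so that group membership and the hiring label become statistically independent in the weighted data. Note that this is a different pre-processing method from the feature removal described above, chosen here because it fits in a few lines; the sample data and names are hypothetical.

```python
from collections import Counter


def reweighing_weights(samples):
    """Return a weight for each (group, label) combination.

    samples is a list of (group, label) tuples. Each weight is
    P(group) * P(label) / P(group, label), so combinations that are
    under-represented relative to independence are weighted up.
    """
    n = len(samples)
    group_counts = Counter(g for g, _ in samples)
    label_counts = Counter(y for _, y in samples)
    joint_counts = Counter(samples)
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for (g, y) in joint_counts
    }


def weighted_positive_rate(samples, weights, group):
    """Weighted share of positive labels within one group."""
    total = sum(weights[(g, y)] for g, y in samples if g == group)
    positive = sum(weights[(g, y)] for g, y in samples if g == group and y == 1)
    return positive / total


# Hypothetical history: group a was hired 3 times out of 4, group b once.
history = [("a", 1), ("a", 1), ("a", 1), ("a", 0),
           ("b", 1), ("b", 0), ("b", 0), ("b", 0)]
weights = reweighing_weights(history)
print(weights)
```

In this toy history the raw hire rates are 75% and 25%, but after reweighing both groups have a weighted hire rate of 50%. The weights would then be passed to a training routine that supports per-sample weights.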

Explainable AI (XAI) models

Explainable AI (XAI) refers to machine learning models designed to provide human-understandable reasoning for their results, moving decisions away from opaque "black-box" scores. In recruitment, XAI systems should explain the specific qualifications, experiences, or skills that led to a recommendation or ranking.

The adoption of XAI is essential because it facilitates auditability, allowing internal teams and external auditors to verify compliance with legal and ethical standards. XAI helps diagnose bias by surfacing the features driving evaluations, enabling technical teams to trace and correct unfair patterns. Open-source toolkits support this work: IBM’s AI Fairness 360 provides fairness metrics and bias mitigation algorithms, and Google’s What-If Tool offers interactive visualizations for probing how features (e.g., years of experience, speech tempo) affect a particular outcome. This transparency is critical for building trust with candidates and internal stakeholders.
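For a simple linear scoring model, an explanation can be as direct as listing how much each feature contributed to the score. The sketch below assumes such a model purely for illustration; the weights, feature names, and `explain_score` function are hypothetical, and more complex models need dedicated attribution methods rather than this shortcut.

```python
def explain_score(weights, features, bias=0.0):
    """Return the score and per-feature contributions, largest effect first.

    For a linear model, a feature's contribution is weight * value, so the
    contributions plus the bias add up exactly to the score.
    """
    contributions = {
        name: weights[name] * features.get(name, 0.0) for name in weights
    }
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(),
                    key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical model weights and one candidate's feature values.
model_weights = {
    "years_experience": 0.5,
    "skills_test": 2.0,
    "employment_gap_months": -0.25,
}
candidate = {
    "years_experience": 4,
    "skills_test": 0.9,
    "employment_gap_months": 6,
}
score, ranked = explain_score(model_weights, candidate)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

An explanation like this makes it visible that the employment gap feature subtracts 1.5 points from this candidate's score, which gives reviewers a concrete starting point for asking whether that feature is job-related or acting as a proxy.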

Technological tools to mitigate AI bias

Fairness-aware algorithms

Beyond mitigating existing bias, organizations can deploy fairness-aware algorithms. These algorithms build fairness into training itself, through explicit fairness constraints or techniques such as adversarial debiasing, to actively discourage the model from learning discriminatory patterns. This approach often involves slightly compromising pure predictive accuracy to achieve measurable equity, prioritizing social responsibility alongside efficiency.

Bias detection tools and structured assessments

One of the most effective methods for mitigating bias is enforcing consistency and objectivity early in the hiring pipeline. Structured interviewing processes, supported by technology, are proven to significantly reduce the impact of unconscious human bias.

AI-powered platforms that facilitate structured interviews ensure every candidate is asked the same set of predefined, job-competency-based questions and evaluated using standardized criteria. This standardization normalizes the interview process, allowing for equitable comparison of responses. For instance, platforms like the HackerEarth Interview Agent provide objective scoring mechanisms and data analysis, focusing evaluations solely on job-relevant skills and minimizing the influence of subjective preferences. These tools enforce the systematic framework necessary to achieve consistency and fairness, complementing human decision-making with robust data insights.

Human oversight and collaboration

AI + human collaboration (human-in-the-loop, HITL)

The prevailing model for responsible AI deployment is Human-in-the-Loop (HITL), which stresses that human judgment should work alongside AI, particularly at critical decision points. HITL establishes necessary accountability checkpoints where recruiters and hiring managers review and validate AI-generated recommendations before final employment decisions. This process is vital for legal compliance (human oversight of high-risk systems is explicitly required under regulations like the EU AI Act) and ensures decisions align with organizational culture and ethical standards. Human reviewers who stay actively involved can correct individual cases, and those corrections can be fed back to steer the system away from biased patterns, facilitating continuous improvement.

The limitation of passive oversight (the mirror effect)

While HITL is the standard recommendation, recent research indicates a profound limitation: humans often fail to effectively correct AI bias. Studies have shown that individuals working with moderately biased AI frequently mirror the AI’s preferences, adopting and endorsing the machine’s inequitable choices rather than challenging them. In some cases of severe bias, human decisions were only slightly less biased than the AI recommendations.

This phenomenon, sometimes referred to as automation bias, confirms that simply having a human "in the loop" is insufficient. Humans tend to defer to the authority or presumed objectivity of the machine and scrutinize its recommendations less critically than they would their own judgments. Therefore, organizations must move beyond passive oversight to implement rigorous validation checkpoints where HR personnel are specifically trained in AI ethics and mandated to critically engage with the AI’s explanations. They must require auditable, XAI-supported evidence for high-risk decisions, ensuring they are actively challenging potential biases, not just rubber-stamping AI output.
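One possible way to check whether oversight has become passive is to track how often each reviewer's final decision differs from the AI recommendation. The sketch below is an illustrative monitoring signal, not an established standard: the log format, thresholds, and function names are assumptions, and a low override rate can also simply mean the recommendations were sound, so flagged reviewers warrant a conversation rather than a conclusion.

```python
from collections import defaultdict


def override_rates(reviews):
    """Map each reviewer to (override rate, number of reviews).

    reviews is an iterable of (reviewer, ai_recommendation, human_decision).
    An override is any review where the human decision differs from the AI's.
    """
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for reviewer, ai_recommendation, human_decision in reviews:
        totals[reviewer] += 1
        if human_decision != ai_recommendation:
            overrides[reviewer] += 1
    return {r: (overrides[r] / totals[r], totals[r]) for r in totals}


def possible_rubber_stamping(reviews, min_reviews=4, min_override_rate=0.05):
    """List reviewers with enough reviews and an override rate below the floor."""
    return sorted(
        reviewer
        for reviewer, (rate, count) in override_rates(reviews).items()
        if count >= min_reviews and rate < min_override_rate
    )


# Hypothetical review log: (reviewer, AI recommendation, human decision).
review_log = [
    ("alice", "advance", "advance"), ("alice", "reject", "reject"),
    ("alice", "reject", "reject"), ("alice", "advance", "advance"),
    ("bob", "advance", "advance"), ("bob", "reject", "advance"),
    ("bob", "reject", "reject"), ("bob", "advance", "advance"),
]
flagged_reviewers = possible_rubber_stamping(review_log)
print(flagged_reviewers)
```

In this toy log, alice never departs from the AI's recommendation while bob overrides one decision in four, so only alice is flagged for follow-up.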

A structured framework is necessary to contextualize the relationship between technical tools and governance processes.

Legal and ethical implications of AI bias: Compliance and governance

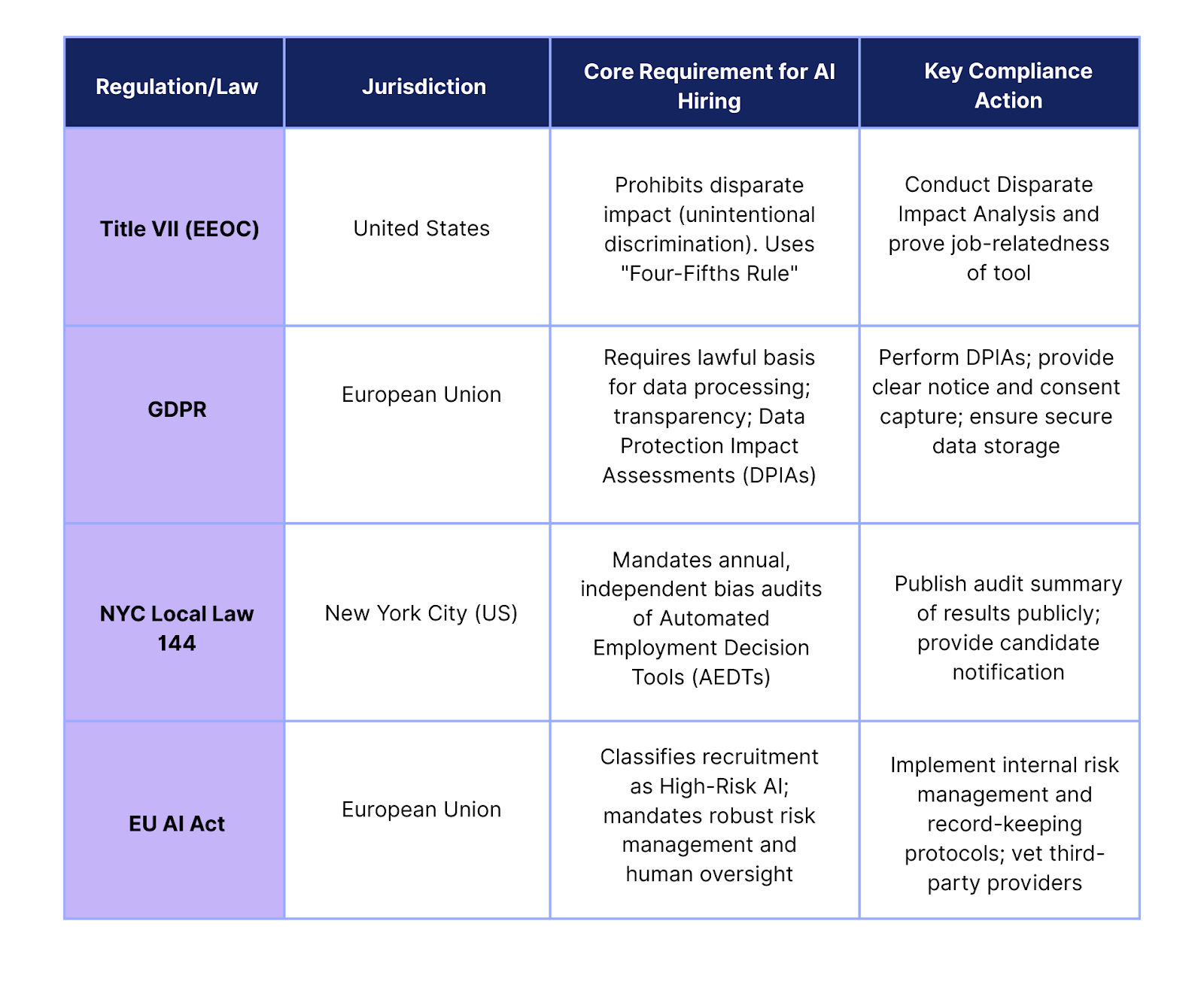

The deployment of AI in recruitment is now highly regulated, requiring compliance with a complex web of anti-discrimination, data protection, and AI-specific laws across multiple jurisdictions.

Legal frameworks and compliance requirements

EEOC and anti-discrimination laws

In the United States, existing anti-discrimination laws govern the use of AI tools. Employers must strictly adhere to the EEOC’s guidance on disparate impact. The risk profile is high, as an employer may be liable for unintentional discrimination if an AI-driven selection procedure screens out a protected group at a statistically significant rate, regardless of the vendor’s claims. Compliance necessitates continuous monitoring and validation that the tool is strictly job-related and consistent with business necessity.

GDPR and data protection laws

The General Data Protection Regulation (GDPR) establishes stringent requirements for processing personal data in the EU, impacting AI recruitment tools globally. High-risk data processing, such as automated employment decisions, generally requires a Data Protection Impact Assessment (DPIA). Organizations must ensure a lawful basis for processing, provide clear notice to candidates that AI is involved, and maintain records of how decisions are made. Audits conducted by regulatory bodies have revealed concerns over AI tools collecting excessive personal information, sometimes scraping and combining data from millions of social media profiles, often without the candidate's knowledge or a lawful basis.

Global compliance map: Extraterritorial reach

Global enterprises must navigate multiple jurisdictional requirements, many of which have extraterritorial reach:

- NYC Local Law 144: This law requires annual, independent, and impartial bias audits for any Automated Employment Decision Tool (AEDT) used to evaluate candidates residing in New York City. Organizations must publicly publish a summary of the audit results and provide candidates with notice of the tool’s use. Penalties are assessed per violation and can accrue for each day of noncompliance, so fines escalate quickly.

- EU AI Act: This landmark regulation classifies AI systems used in recruitment and evaluation for promotion as "High-Risk AI." This applies extraterritorially, meaning US employers using AI-enabled screening tools for roles open to EU candidates must comply with its strict requirements for risk management, technical robustness, transparency, and human oversight.

Ethical considerations for AI in recruitment

Ethical AI design

Ethical governance requires more than legal compliance; it demands proactive adherence to principles like Fairness, Accountability, and Transparency (FAT). Organizations must establish clear, top-down leadership commitment to ethical AI, allocating resources for proper implementation, continuous monitoring, and training. The framework must define acceptable and prohibited uses of AI, ensuring systems evaluate candidates solely on job-relevant criteria without discriminating based on protected characteristics.

Third-party audits

Independent, third-party audits serve as a critical mechanism for ensuring the ethical and compliant design of AI systems. These audits test AI models for bias and verify that data practices adhere to ethical and legal standards, particularly regarding data minimization. For example, auditors check that tools are not inferring sensitive protected characteristics (like ethnicity or gender) from proxies, a practice that is too unreliable to support effective bias monitoring and often breaches data protection principles.

Effective AI governance cannot be confined to technical teams or HR. AI bias is a complex, socio-technical failure with immediate legal consequences across multiple jurisdictions. Mitigation requires blending deep technical expertise (data science) with strategic context (HR policy and law). Therefore, robust governance mandates the establishment of a cross-functional AI Governance Committee. This committee, including representatives from HR, Legal, Data Protection, and IT, must be tasked with setting policies, approving new tools, monitoring compliance, and ensuring transparent risk management across the organization. This integrated approach is the structural bridge connecting ethical intent with responsible implementation.

Future of AI in recruitment: Proactive governance and training

The trajectory of AI in recruitment suggests a future defined by rigorous standards and sophisticated collaboration between humans and machines.

Emerging trends in AI and recruitment

AI + human collaboration

The consensus among talent leaders is that AI's primary role is augmentation—serving as an enabler rather than a replacement for human recruiters. By automating repetitive screening and data analysis, AI frees human professionals to focus on qualitative judgments, such as assessing cultural fit, long-term potential, and strategic alignment, which remain fundamentally human processes. This intelligent collaboration is crucial for delivering speed, quality, and an engaging candidate experience.

Fairer AI systems

Driven by regulatory pressure and ethical concerns, there is a clear trend toward the development of fairness-aware AI systems. Future tools will increasingly be designed to optimize for measurable equity metrics, incorporating algorithmic strategies that actively work to reduce disparate impact. This involves continuous iteration and a commitment to refining AI to be inherently more inclusive and less biased than the historical data it learns from.

Preparing for the future

Proactive ethical AI frameworks

Organizations must proactively establish governance structures today to manage tomorrow’s complexity. This involves several fundamental steps: inventorying every AI tool in use, defining clear accountability and leadership roles, and updating AI policies to document acceptable usage, required oversight, and rigorous vendor standards. A comprehensive governance plan must also address the candidate experience, providing clarity on how and when AI is used and establishing guidelines for candidates' use of AI during the application process to ensure fairness throughout.

Training HR teams on AI ethics

Training is the cornerstone of building a culture of responsible AI. Mandatory education for HR professionals, in-house counsel, and leadership teams must cover core topics such as AI governance, bias detection and mitigation, transparency requirements, and the accountability frameworks necessary to operationalize ethical AI. Furthermore, HR teams require upskilling in data literacy and change management to interpret AI-driven insights accurately. This specialized training is essential for developing the critical ability to challenge and validate potentially biased AI recommendations, counteracting the observed human tendency to passively mirror machine bias.

Take action now: Ensure fair and transparent recruitment with HackerEarth

Mitigating AI bias is the single most critical risk management challenge facing modern talent acquisition. It demands a sophisticated, strategic response that integrates technological solutions, rigorous legal compliance, and human-centered governance. Proactive implementation of these measures not only safeguards organizational integrity but also ensures future competitiveness by securing access to a diverse and qualified talent pool.

Implementing continuous auditing, adopting Explainable AI, and integrating mandatory human validation checkpoints are vital first steps toward building a robust, ethical hiring process.

Start your journey to fair recruitment today with HackerEarth’s AI-driven hiring solutions. Our Interview Agent minimizes both unconscious human bias and algorithmic risk by enforcing consistency and objective, skill-based assessment through structured interview guides and standardized scoring. Ensure diversity and transparency in your hiring process. Request a demo today!

Frequently asked questions (FAQs)

How can AI reduce hiring bias in recruitment?

AI can reduce hiring bias by enforcing objectivity and consistency, which human interviewers often struggle to maintain. AI tools can standardize questioning, mask candidate-identifying information (anonymized screening), and use objective scoring based only on job-relevant competencies, thereby mitigating the effects of subtle, unconscious human biases. Furthermore, fairness-aware algorithms can be deployed to actively adjust selection criteria to achieve demographic parity.

What is AI bias in recruitment, and how does it occur?

AI bias in recruitment is systematic discrimination embedded within machine learning models that reinforces existing societal biases. It primarily occurs through two mechanisms: data bias, where historical hiring data is skewed and unrepresentative (e.g., dominated by one gender); and algorithmic bias, where design choices inadvertently amplify these biases or use proxy variables that correlate with protected characteristics.

How can organizations detect and address AI bias in hiring?

Organizations detect bias by performing regular, systematic audits and bias testing, often required by law. Addressing bias involves multiple strategies: diversifying training data, employing fairness-aware algorithms, and implementing Explainable AI (XAI) to ensure transparency in decision-making. Continuous monitoring after deployment is essential to catch emerging biases.

What are the legal implications of AI bias in recruitment?

The primary legal implication is liability for disparate impact under anti-discrimination laws (e.g., Title VII, EEOC guidelines). Organizations face exposure to high financial penalties, particularly under specific local laws like NYC Local Law 144. Additionally, data privacy laws like GDPR mandate transparency, accountability, and the performance of DPIAs for high-risk AI tools.

Can AI help improve fairness and diversity in recruitment?

Yes, AI has the potential to improve fairness, but only when paired with intentional ethical governance. By enforcing consistency, removing subjective filters, and focusing on skill-based evaluation using tools like structured interviews, AI can dismantle historical biases that may have previously gone unseen in manual processes. However, this requires constant human oversight and a commitment to utilizing fairness-aware design principles.

What are the best practices for mitigating AI bias in recruitment?

Best practices include: establishing a cross-functional AI Governance Committee; mandating contractual vendor requirements for bias testing; implementing Explainable AI (XAI) to ensure auditable decisions; requiring mandatory human critical validation checkpoints (Human-in-the-Loop); and providing ongoing ethical training for HR teams to challenge and correct AI outputs.